What will the next format be to usher in a new music industry, like the record did in the 20th century?

The 20th century saw the rise of consumerist culture as a response to mass production causing supply to outgrow consumer demand. An example of this phenomenon is 20th-century fashion, which became highly cyclical (and wasteful), marketing new clothes for every season. After World War II, it became common to use clothing to express oneself through styles and fashions which often went hand-in-hand with music subcultures: just think of hippies, skinheads and punk music, hiphop, funk, or disco.

“Our enormously productive economy demands that we make consumption our way of life, that we convert the buying and use of goods into rituals, that we seek our spiritual satisfaction and our ego satisfaction in consumption. We need things consumed, burned up, worn out, replaced and discarded at an ever-increasing rate.”

– Victor Lebow (Journal of Retailing, Spring 1955)

Consumerism helped turn the recording industry into the most powerful part of the music business ecosystem, which had previously been dominated by publishers. It changed music. The record player moved into the living room, then every room of the house, and the Walkman (now the smartphone) put music into every pocket. Music gained and lost qualities along the way.

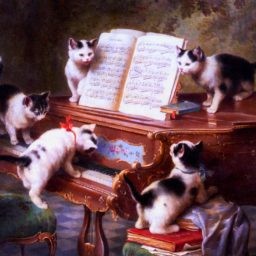

Previously, it had been common for middle class families to have a piano in the home. Music was a social activity; music was alive. If you wanted to hear your favourite song, it would sound slightly different every time. With the recording, music became static and sounded the same way every time. And the shared songs of our culture were displaced by corporate-controlled pop music. People stopped playing the piano, and creators and ‘consumers’ became more clearly distinguished culturally.

With streaming, we are reaching the final stage of this development. Have a look at the above Victor Lebow quote and tell me streaming does not contribute to music being worn out, replaced and discarded at an ever-increasing rate.

The rules of mass production don’t apply to music anymore, since it’s no longer about pressing recordings: anything can be copied and distributed infinitely on the web. The democratisation of music production has turned many ‘consumers’ into creators again. Perhaps this started in the 70s and 80s with drum computers, which helped kick off two of today’s fastest-growing genres: hiphop and house music. Today, this democratisation has turned our smartphones into music studios, with producers of worldwide hits making songs on their iPhones.

We see more people producing music: our Soundcloud feeds are constantly updated, Spotify’s algorithms send new music out to us through daily mixes, Discover Weekly, Release Radar, and Fresh Finds, and we now have the global phenomenon of New Music Fridays. With this massive amount of new music, we are simply not connecting to music in the same way as we did when music was scarce. We move on faster. As a result, music services (music providers, essentially) place a big emphasis on music discovery. We are shifting from the age of mass media and mass production to something more complex: many-to-many, decentralised (music) production on a massive scale.

Has consumerism broken music culture? I don’t think so. As a matter of fact, consumerism is also what producers of music creation software and hardware depend on, and those tools contribute to the democratisation of music, returning musical participation to what it was in the days when the piano was the default music playback device.

If streaming is the final stage of the age of the recording, then what’s next?

Embedded deep in the cultures of hiphop and house music, we can see what cultural values are important to the age of democratised music creation. Both genres heavily sampled disco and funk early on in their lifecycles. One of the most famous samples in hiphop and electronic music culture is the Amen Break. With the advent of the sampler, the drum break from the Winstons’ ‘Amen, Brother’ became widespread and instrumental to the birth and development of subgenres of electronic music in the 90s.

Not so long ago, ‘remix culture’ was still a notion one could discuss in abstract terms, for instance in the open-source documentary RiP!: A Remix Manifesto, which discussed the topic at length. Things have changed fast, however, turning the formerly abstract into a daily reality for many.

Since the documentary’s release in 2008, social networks have boomed. Back then, only 24% of the US population was active on social media, but now that’s ~80%. With the increasing socialisation of the web, and with images becoming easier to manipulate, we saw an explosion of internet memes, typically in the form of image macros which can be adjusted to fit new contexts or messages.

The same is happening to music through ‘Soundcloud culture’. Genres are born fast through remix, and people iterate on new ideas rapidly. A recent example of such a genre is moombahton, which is now one of the driving sounds behind today’s pop music.

Snapchat filters and apps like Musically let users play around with music and place themselves in the context of the song. Teens nowadays are not discovering music through some big-budget music video broadcast to them on MTV; they are discovering it by seeing their friends dance to it on Musically.

Music is becoming interactive, and adaptable to context.

Matching consumer trends and expectations with technology

Perhaps music is one of the first fields in which consumerist culture has hit a dead end, making it necessary for it to evolve into something beyond itself. People increasingly expect interactivity, since the music you listen to is no longer enough on its own to express identity.

Music production is getting easier. Combined with internet meme culture, it makes sense for people to use music for jokes or to make connections by making pop culture references through sampling. Vaporwave is a great example, as are internet rave tracks.

Instead of uniting behind bands and icons, subcultures can now participate in setting the sound of their genre, creating a more customised type of sound that is more personally relevant to the listener and creator.

Artificial intelligence will make it even easier to quickly create music and remixes. Augmented reality, heavily emphasised in Apple’s latest product release, is basically remix as a medium. When AI, augmented reality, and the internet of things converge, our changing media culture will speed up to form new types of contexts for music.

That’s where the future of music lies. Not in the static recording, but in the adaptive. The recording industry that rose from the record looked nothing like the publishing industry. It latched on to the trend of consumerism and created a music industry of a scale never seen before. Now that we’ve reached peak consumerism, and are at the final phase of the cycle for the static recording, there’s room for something new and adaptive. And as with the recording business before it, the music business that will rise from adaptive media will look nothing like the current music industry.

So, in dealing with this new media reality, you go to where the audience is. Apparently that’s no longer in app stores, but on social networks and messaging apps. Some of the latter, and most prominently Facebook Messenger, allow for people to build chatbots, which are basically apps inside the messenger.
